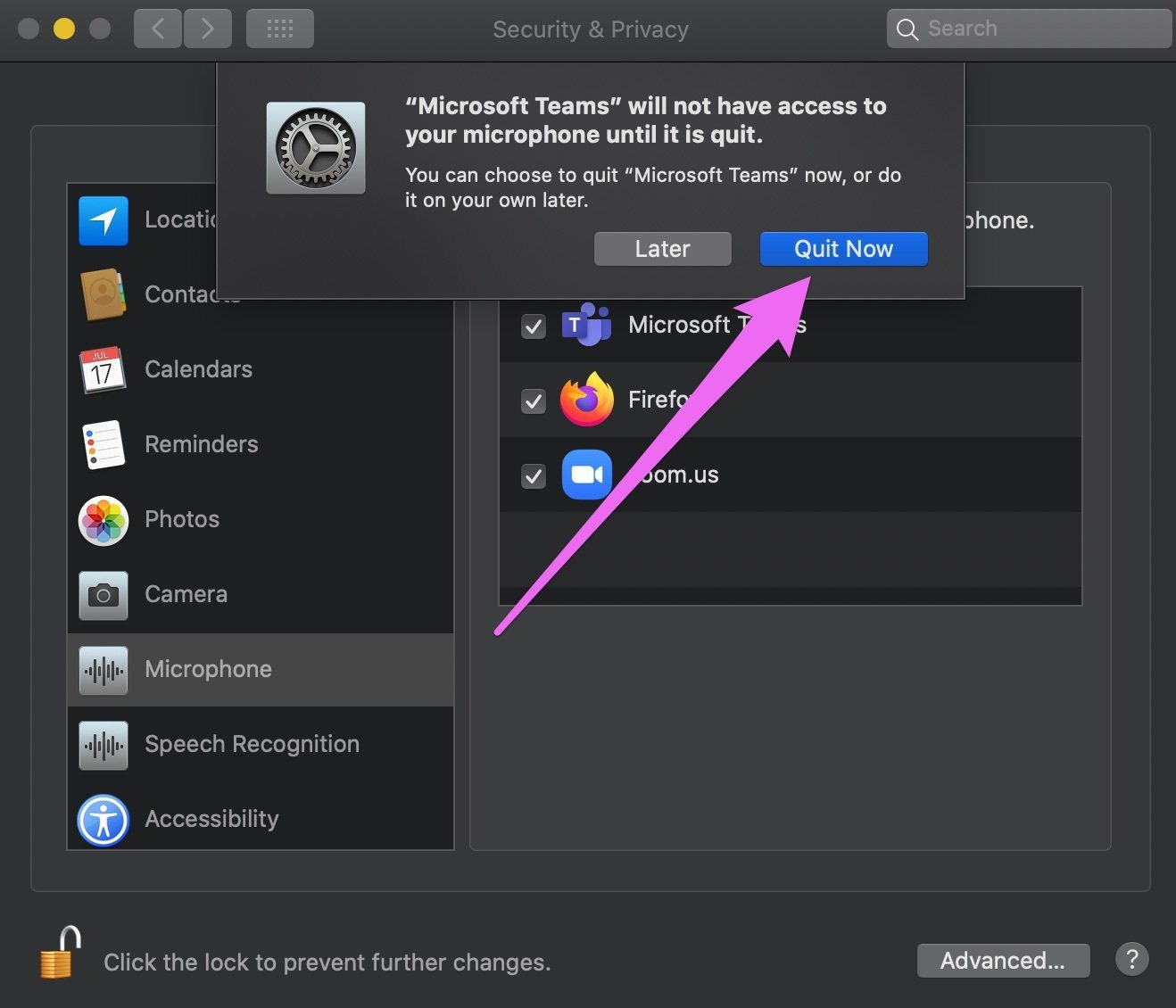

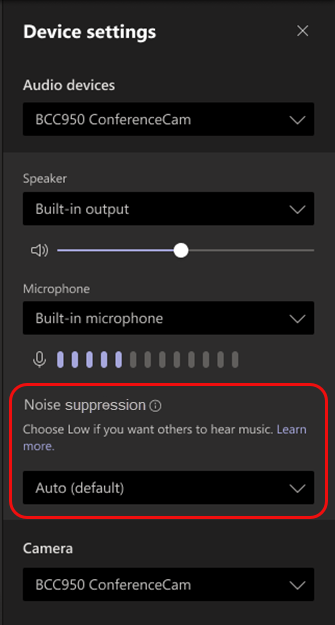

New machine learning and artificial intelligence features in Microsoft Corp.’s Teams promise to improve the sound quality of meetings and calls dramatically, even in challenging situations. The changes seek to address issues such as background noise, poor acoustics and reduced quality when users are not using a headset.

On that last point in particular, problems often arise from the proximity of microphones and speakers, which creates a feedback loop where incoming audio from a speaker is picked up by a microphone and then rebroadcast. The machine learning now used by Microsoft Teams is said to prevent unwanted echoes, a “welcome addition for anyone who had had their train of thought derailed by the sound of their own words coming back at them,” AI and Machine Learning Program Manager Solomiya Branets wrote on the Microsoft Teams blog.

Microsoft says the model goes a step further to improve dialogue over Teams by enabling “full-duplex” sound. This lets users speak and listen simultaneously, allowing for interruptions that make the conversation seem more natural and less choppy. It directly addresses a common issue with teleconferencing, where one person tries to speak over another.

On the acoustic side, Teams now uses AI to reduce reverberation, improving audio quality from users in rooms with poor acoustics. The feature means that users sound like they’re speaking into a headset microphone, even in a large room where speech and other noise can bounce from wall to wall. Audio using the feature is claimed to sound no different from conversations in an office.

For video, Microsoft has previously released AI-based video and screen sharing quality optimization breakthroughs for Teams.
